At the heart of any discussion of strategic decision-making is the question of how to value each agent’s strategic position. In the theory of games, this valuation is based on the notion of utility; the assumption is that each agent values the outcome of the decision independently. I carry that notion of valuation over into decision process theory. I assume that each agent or player can measure the utility of any given strategy by assigning it a numerical preference. As in game theory, the player can also measure the utility of a mixture of strategies by thinking of the mixture as assigning a frequency to each pure possibility and making choices with those frequencies over a sequence of plays. In either case the preferences are idiosyncratic: they belong to the player who owns those choices.
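To make the idea of valuing a mixture concrete, here is a minimal sketch in Python. It assumes the standard game-theory reading, in which the utility of a mixture is the frequency-weighted average of the utilities of the pure possibilities. The three utility values, the frequencies, and the function names are hypothetical, chosen only for illustration; they are not taken from the white papers.

```python
import random


def expected_utility(utilities, frequencies):
    """Frequency-weighted average of the pure-strategy utilities."""
    if len(utilities) != len(frequencies):
        raise ValueError("need one frequency per pure strategy")
    if abs(sum(frequencies) - 1.0) > 1e-9:
        raise ValueError("frequencies must sum to 1")
    return sum(u * f for u, f in zip(utilities, frequencies))


def average_over_plays(utilities, frequencies, plays, seed=0):
    """Average utility realized when choices are drawn with the given
    frequencies over a sequence of plays."""
    rng = random.Random(seed)
    outcomes = rng.choices(utilities, weights=frequencies, k=plays)
    return sum(outcomes) / plays


# Hypothetical preferences of one player over three pure strategies.
utilities = [3.0, 1.0, 0.0]
frequencies = [0.5, 0.25, 0.25]

print(expected_utility(utilities, frequencies))
print(average_over_plays(utilities, frequencies, plays=20000))
```

Over a long sequence of plays the realized average settles near the weighted average, which is the sense in which a single number can stand in for the mixture. A second player would supply a different list of utilities, since the preferences are idiosyncratic.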

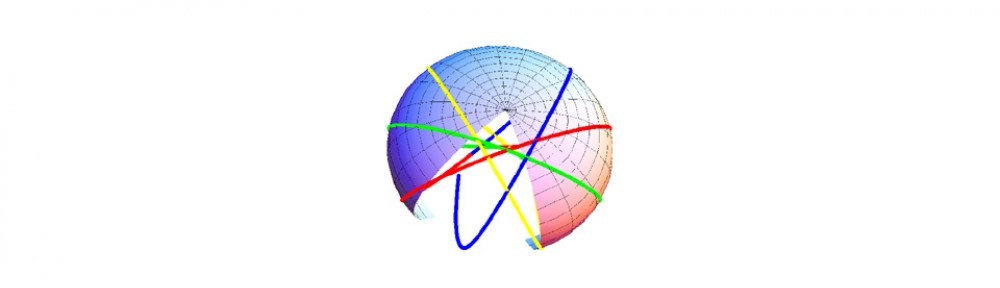

Preferences defined in this way provide a numerical position that is more than just a number for each strategy; it represents a physical attribute of the decision-making process. It is very much like a global positioning system for keeping track of positions on the earth: a fair amount of coordination is involved in relating one position to another. We get by with our GPS devices because this complexity is hidden inside them. Such a positioning system is also used in the theories of which decision process theory is a special case. My initial approach was to adopt the same strategy those theories use to define a positioning system: I have adopted what the literature calls harmonic coordinates to define the positions. This requires the definition of a scalar field for each strategic direction. Each scalar field captures the physical characteristics of the preferences associated with its direction. The theory imposes constraints on the behavior of these scalar fields, and those constraints can be verified by detailed analysis of real-world behaviors. The theory also addresses the detailed coordination alluded to above.
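For readers who want the formula behind the term, harmonic coordinates are usually defined in differential geometry by requiring each coordinate, treated as a scalar field, to satisfy the covariant wave equation. The notation below (a metric $g_{\alpha\beta}$ with determinant $g$, and coordinates $x^{\mu}$, one per direction) is the standard textbook notation and is an assumption here; the white papers may use different symbols.

```latex
\Box x^{\mu} \;\equiv\; \frac{1}{\sqrt{|g|}}\,
  \partial_{\alpha}\!\left( \sqrt{|g|}\; g^{\alpha\beta}\, \partial_{\beta} x^{\mu} \right) = 0
\qquad \text{for each direction } \mu .
```

Read this way, the scalar field attached to a strategic direction is the coordinate function itself, and the equation is one of the constraints that the field must obey.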

Since many detailed examples are given in the white papers on this site, it may be helpful to put those numerical examples into a more general context. I have suggested that a very large class of models, called stationary models in the literature of general relativity, is a useful class to study for decision-making. In these models, there is always a frame of reference in which the decision flows are stationary and the distance metric is independent of time. In the formal language of differential geometry, translation in time is an isometry. I specialized to the case in which the flows are not only stationary but zero in this special frame, which I called the central co-moving frame. The detailed coordination described above, however, is absent in this frame.
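In symbols, and again assuming standard general-relativity notation rather than the notation of the white papers, stationarity means that there is a vector field $\xi$ along which the metric does not change. In coordinates adapted to that field, the metric components simply do not depend on the time coordinate $t$:

```latex
\mathcal{L}_{\xi}\, g_{\mu\nu}
  \;=\; \nabla_{\mu}\xi_{\nu} + \nabla_{\nu}\xi_{\mu} \;=\; 0 ,
\qquad
\xi = \partial_{t}
\;\;\Longrightarrow\;\;
\partial_{t}\, g_{\mu\nu} = 0 .
```

The first equation is the Killing equation; the second is what it reduces to in the adapted frame.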

To get an idea of why, consider the analogy of a wave traveling in water. The coordination of interest is the behavior of the wave. In particular, if one generates a wave at a source, we want to know the behavior of all the subsequent ripples. Nothing, however, prevents us from viewing the wave from the perspective of an army of corks uniformly distributed and riding on the surface of the water. Each cork, from its own point of view, is at rest (co-moving). The model assumption is that, over time, the attributes of the water are constant for each cork (though in principle different for different corks). The cork doesn’t see anything of direct interest to us. Nevertheless, from the behavior of each cork, we gain spatial knowledge about the water. That knowledge can be used to reconstruct the ripple behavior if we add additional equations. The harmonic equations from differential geometry are just such additional equations. They take the spatial information from the co-moving frame and provide wave equations that use it to project the behavior of the ripples that might occur.